Rust Memory Layout Under the Hood

Table of Contents

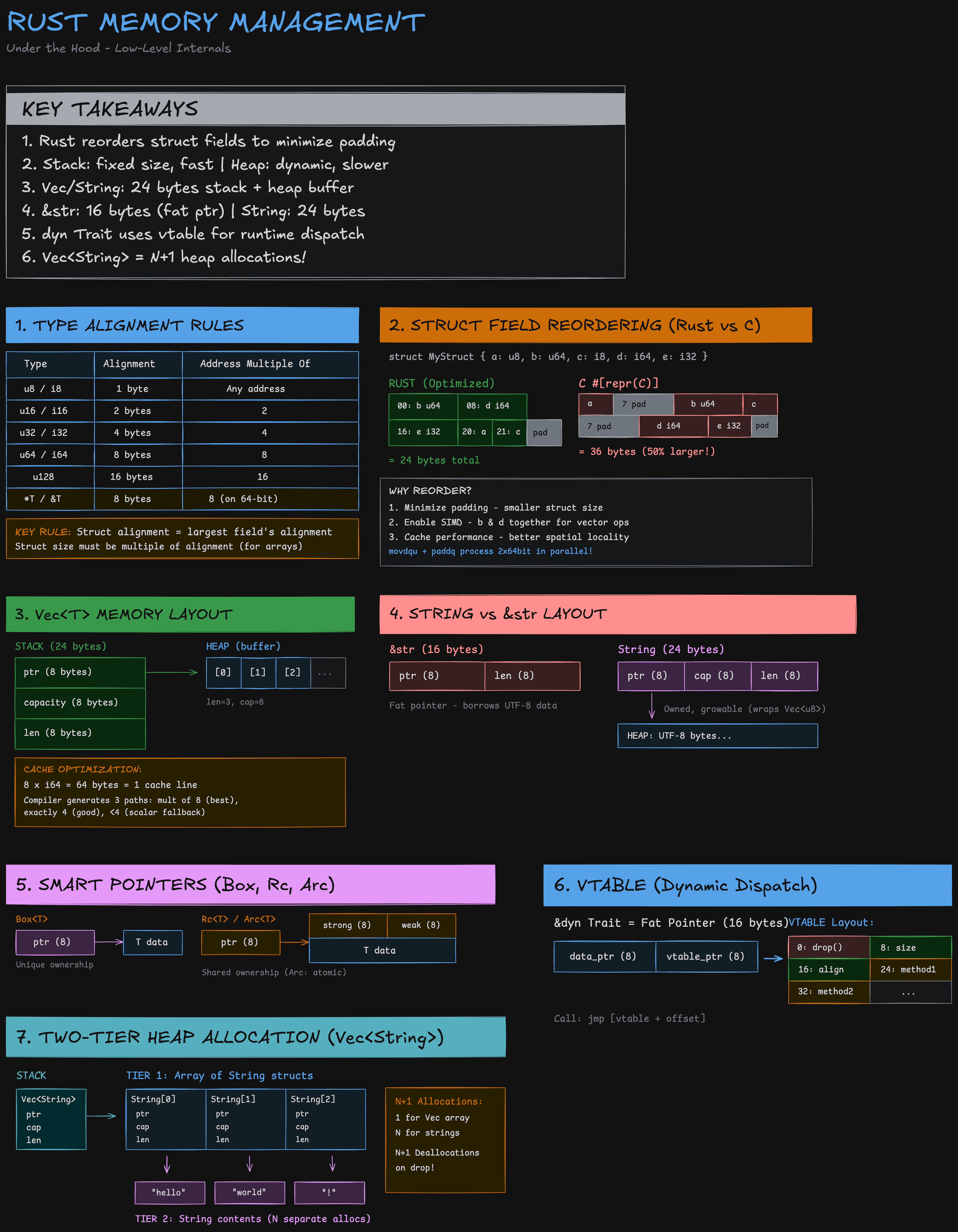

A visual guide to how Rust lays out structs, Vec, String, smart pointers, and trait objects in memory — alignment rules, field reordering, and the byte-level details that shape your program's performance.

Every Rust struct you write is a memory layout decision.

The kind of thing I wish I had a clear reference for when I was staring at perf output wondering why my “simple” struct was blowing cache lines. So I made one.

Most engineers never think about it. But the compiler does.

Struct Field Reordering

Take a struct with fields: u8, u64, i8, i64, i32.

In C with #[repr(C)], that’s 36 bytes. Padding scattered between fields because the compiler must preserve your field order.

In Rust? 24 bytes. Same fields. The compiler reordered them — largest alignment first, packing b:u64 and d:i64 together, then e:i32, then the small types. 33% smaller. And it’s not just about size — putting those two 64-bit fields adjacent enables SIMD vectorization. movdqu + paddq processing 2×64bit in parallel. That reordering is doing more work than most “optimizations” people spend days on.

This is the kind of thing that compounds.

Vec<T> Layout

Vec<T> is 24 bytes on the stack — pointer, capacity, length — pointing to a contiguous heap buffer. 8 elements of i64 = 64 bytes = exactly one cache line. The compiler can generate vectorized code paths when your data aligns to cache lines. Your data layout is literally shaping the assembly.

String vs &str

&str is a 16-byte fat pointer — just borrowing UTF-8 data. String is 24 bytes — same as Vec because it wraps Vec<u8>. Know when you need ownership vs borrowing and you stop allocating for no reason.

The Two-Tier Allocation Problem

Vec<String> is where it gets expensive. Two-tier heap allocation — 1 allocation for the Vec’s buffer of String structs, then N separate allocations for each String’s contents. N+1 allocations total. N+1 deallocations on drop. This is why Vec<&str> exists and why arena allocators matter.

Dynamic Dispatch

dyn Trait — every trait object is a 16-byte fat pointer: data pointer + vtable pointer. That vtable holds drop, size, align, then your methods at known offsets. Every method call is jmp [vtable + offset]. No inlining. No devirtualization. You’re trading static dispatch performance for runtime flexibility, and that’s fine — as long as it’s a conscious choice.

None of this is hidden knowledge. It’s all in the compiler output if you look. But I got tired of reconstructing it every time, so I made the visual cheat sheet above that maps out the actual byte-level layouts — alignment rules, field reordering, Vec/String internals, smart pointers, vtable dispatch, and the two-tier allocation pattern.